Overview

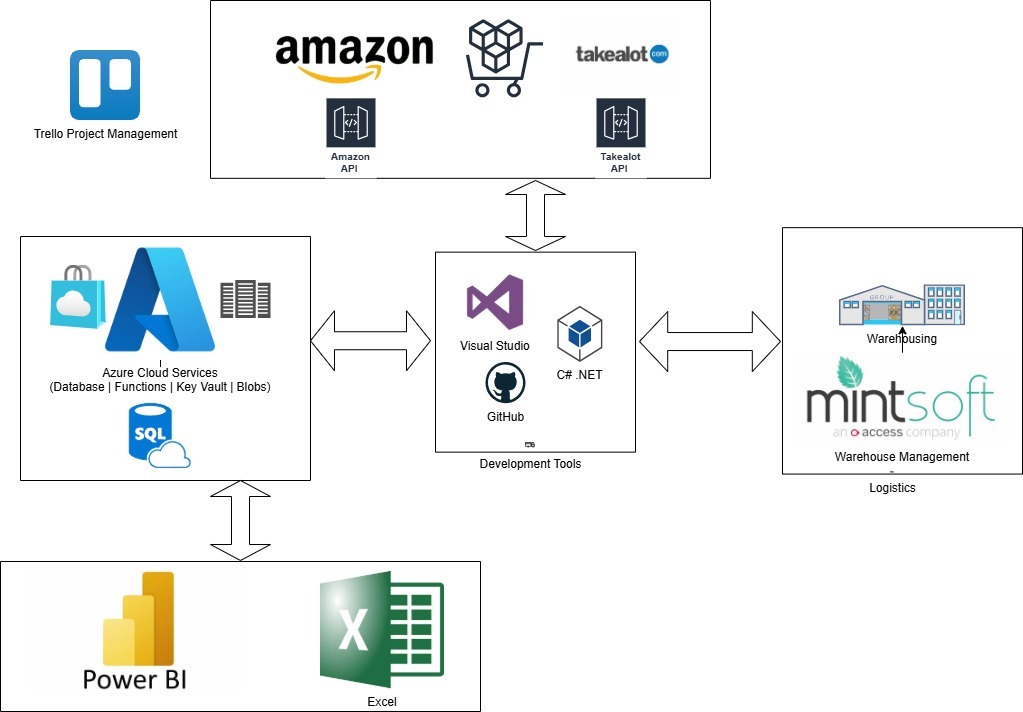

This Custom Data Mart is the foundational "Backend" project for my portfolio. By moving the logic into Azure Functions and a Kimball-based Star Schema, I have built a scalable, professional data warehouse that supports high-level BI and reporting.

Its core function was to create a centralized Azure SQL Data Warehouse utilizing Kimball Dimensional Modeling to consolidate fragmented API data from Takealot, Amazon, and MintSoft into a high-performance analytical source.

Technical Architecture

Dimensional Modeling (The Kimball Approach)

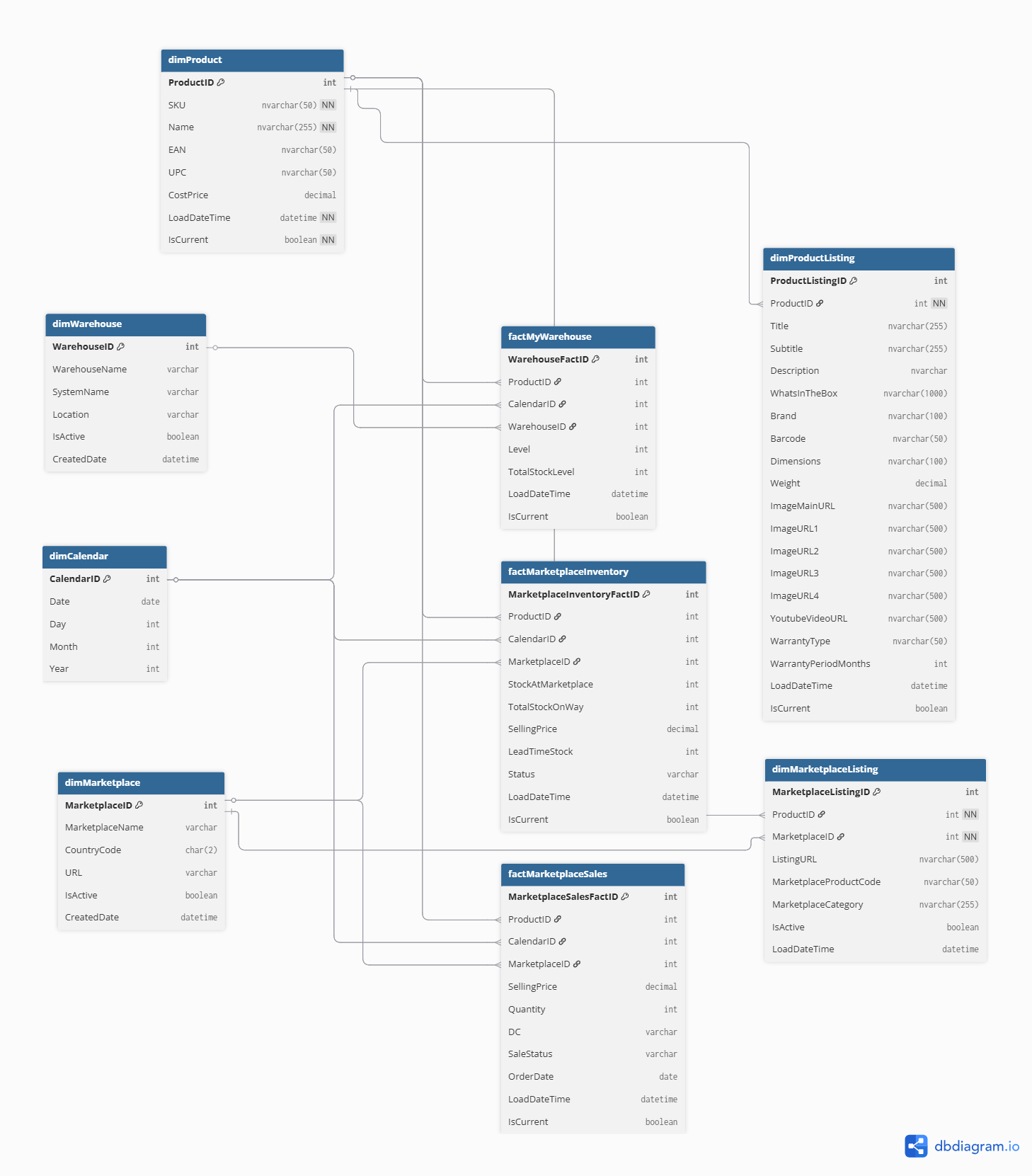

I designed a Star Schema to optimize query performance and ensure data integrity across multiple marketplaces.

- The Model: The architecture consists of three core Fact Tables (

Sales,Inventory,Warehouse) linked to shared Dimension Tables (dimProduct,dimCalendar,dimMarketplace). - SCD Implementation (Type 2): To track historical changes (such as price fluctuations or product re-naming), I implemented Slowly Changing Dimensions (SCD). Using

IsCurrentflags andLoadDateTimetimestamps, the model can reconstruct the state of the business at any historical point. - The "Golden Record" Challenge: I solved the cross-platform SKU mismatch problem by establishing

dimProductas the master reference. The C# ETL service performs a lookup on the unique SKU during ingestion, mapping disparate marketplace identifiers to a single internalProductID.

C# ETL Orchestration (Azure Functions)

The extraction logic is built as a suite of Serverless Azure Functions, ensuring high availability and cost-effective compute.

- High-Performance Extraction: I developed custom C# clients using

HttpClientandSystem.Text.Jsonto consume REST APIs. The functions are triggered by TimerTriggers (executing daily) but support ad-hoc execution via HTTP triggers. - The "Upsert" Strategy: To maintain data cleanliness, the pipeline uses a "Check-then-Insert" logic. For example: When ingesting Takalot Sales, the code queries the database to see if a sale status has changed before committing a new record, preventing duplicate entries while capturing status updates.

Enterprise-Grade Security & Monitoring

- Secrets Management: I integrated Azure Key Vault to store sensitive API keys and SQL connection strings. The C# functions retrieve these at runtime using

SecretClient, ensuring no credentials are ever hardcoded in the source control. - Observability: I developed a dual-layer monitoring system:

- Custom Metadata Logging: A dedicated

FunctionLoggertable tracks every run, recordingFunctionRunGuid, record counts, and execution duration. - Automated Reporting: The reporting module aggregates daily logs into a professional HTML status report sent via SMTP, providing an immediate overview of data health.

- Custom Metadata Logging: A dedicated

Business Impact & Value

- Historical Persistence: Unlike marketplace portals that often limit history, this Data Mart builds a permanent historical record of inventory and sales trends.

- System Agility: Created a Decoupled Architecture. The Power BI reports point to the SQL views rather than the APIs; if a marketplace changes its API structure, only the C# "wrapper" needs an update, leaving the analytical layer untouched.

- Scalability: The Azure SQL backend is designed to handle millions of rows, allowing the business to expand into new marketplaces (e.g., Makro, Loot) by simply adding a new Function module to the existing schema.

Portfolio Perspective

This project represents the transition from 'Business Intelligence' to 'Data Engineering.' By building a custom Azure backbone, I solved the most common problem in eCommerce: fragmented data. I successfully implemented enterprise patterns, Kimball modeling, serverless compute, and secure secret management. to create a robust data engine that powers analytics and decision making for the entire business.

Appendix:

Database Design & Diagram

Kimball Facts and Dimensions